Quick Look: “Dealer” by John Martyn (1977)

My goodness, it has been a crazy couple of months in my extra-blog life, and there is not much indication that things are going to quiet down anytime soon. The promised post about the brave anti-terror dog is indeed in the works; in the meantime, please enjoy the first—okay, let’s call it the second—in a new series of brief (yes!) posts that will pretty much attempt to say only one thing ABOUT only one thing. There’s a ton of distance between an 8,000-word commando raid on a pop hit and “Martin shared a link on your wall,” right? And SOME of that territory’s gotta be worth checking out.

Thus, please enjoy with my compliments the following:

Here is a fruit fallen from a rather peculiar branch of the pop-music tree: John Martyn in 1977, performing “Dealer,” the first track from his soon-to-be-released LP One World. Martyn began his career as an English folk and blues artist in the mold of Davey Graham, but later moved away from the clear diction and crisp acoustics of traditional folk in the direction of jazz and dub; the One World studio sessions followed a transformative encounter with the justly fabled Lee “Scratch” Perry and a brief interlude as a session player in Jamaica. While Martyn had been using Echoplex tape delay for years as an occasional component of his sound, by the mid-70s it had become central to his live performances, helping to yield the constantly-accreting cascade of notes we hear in much of his output from this period. His experiences in Jamaica, we can imagine, suggested even broader avenues for exploration: I think the subtractive logic of dub is pretty apparent, for instance, in “Small Hours,” the last track on One World, where the Echoplex and a volume pedal serve to elide the sound of Martyn’s attack on his strings, thereby separating the guitar’s sound from its source. (I think this solo performance—from Reading University in 1978—is extraordinary, and with all due respect to Steve Winwood’s Moog noodling, I prefer it to the album version.)

While creatively fertile, 1977 was a dark time for John Martyn personally, and it was about to get darker: by the end of the decade he’d be divorced, and his already pronounced proclivity for alcohol and substance use and abuse would rapidly expand and escalate. In retrospect, “Dealer” comes off a little like the view from the apex of the rollercoaster: the last clear glimpse of where things are going and what’s about to happen.

What strikes me as most remarkable about “Dealer” is how it can’t or won’t settle on how literally it’s meant to be about a vendor of narcotics. If it’s not literal, then what is it a metaphor for, exactly? I’ll bet you can think of several answers, and I’ll bet they’re all correct. A slightly weaker but fascinating performance of the same song from a year later—the coaster now on its way down, picking up speed—makes it clear that the indictment that “Dealer” intends to hand down is pretty broad: Martyn is contemptuous toward his audience, almost combative, and the audience seems amused by this. It’s clear that the encounter is tainted by bad faith, but it’s difficult, maybe impossible, to determine who the sucker is, who’s taking advantage of whom.

I think the second verse of “Dealer”—shifted to third in the 1978 performance, with a few pronouns tellingly shuffled—is particularly sharp and true: a corrosive blast of self- and other-loathing aimed at anyone who earns a living selling a product that people want, but don’t need. That, needless to say, implicates popular music, and implicates Martyn himself:

They tell me that they dig my shit

so I sell it to them cheap.

They bring their scales and check the deal,

’cos they’re scared that I might cheat.

Well I’m just a spit and polish

on a fat man’s shiny shoe.

Well I think I hate them for it

and I think they hate me too.

Oh Abbottabad we are leaving you now

Okay, so . . . Osama bin Laden. Not gonna miss the dude, frankly.

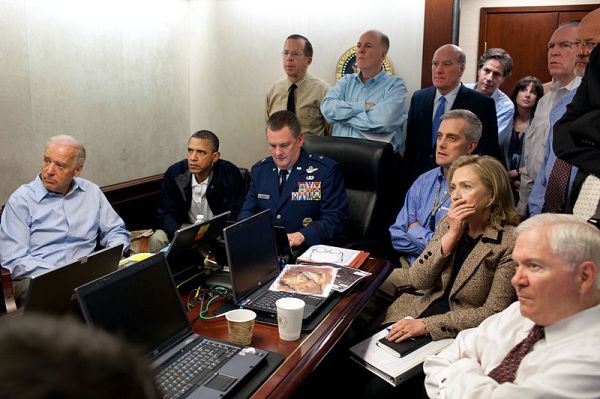

It’s been a little over two months now since Bin Laden got himself assassinated by U.S. Navy SEALs in Abbottabad, Pakistan. My spouse and I made it an early night on Sunday, May 1, and as such we were unaware until the following morning that we’d been sleeping in a post-Osama world.

I didn’t chart my reaction to the news very rigorously. I remember being a little surprised at exactly where the guy had turned up (nice neighborhood!) and otherwise just sort of generally relieved—relieved less that Bin Laden was no longer a threat than that the raid that killed him wasn’t a total fiasco, as it might well have been. Mine was not a put-on-an-American-flag-cape-and-climb-up-a-tree type of reaction, or even a woohoo-Facebook-status-update kind of deal. I felt neither more nor less safe, neither more nor less “confident in the direction of the country” as the pollsters like to say. I guess I’d characterize myself as satisfied.

Now, I don’t like to think of myself as someone who happily receives news of extrajudicial killings paid for by my tax dollars . . . but there you have it, gang. The best argument I can offer in my defense is the hope that Bin Laden’s assassination has marked the beginning of the end of a very bad time—not only the military engagement in Afghanistan, but an entire decade of U.S. foreign policy conducted in the manner of the wounded Polyphemus, blinded and drunk. If our only post-9/11 retributive options were 1) to invade and occupy two sizable Asian countries—one of which had absolutely nothing to do with the 2001 attacks—with nearly half a million coalition troops and 2) to assassinate a bunch of suspicious individuals via special-forces hit squad and flying robot, then I’ll take Option Two, thanks. (I hope you don’t need me to point out that, strictly speaking, these were NOT our only two options.) Taken together, two recent policy statements—one by President Obama regarding a post-Bin-Laden troop drawdown in Afghanistan, and one by homeland-security wonk John O. Brennan announcing Obama’s hip new counterterrorism strategy—clearly indicate that this is how our nation’s dirty business will be conducted in the future . . . which is how we used to say “going forward.” (In a small masterpiece of grammatical hedging, Brennan’s speech promises that the administration “will be mindful that if our nation is threatened, our best offense won’t always [!] be deploying large armies abroad but delivering targeted, surgical pressure to the groups that threaten us.”)

While the success of “Operation Neptune Spear”—I’m just gonna go ahead and [sic] that—has no doubt inspired the institutions charged with counterterrorism and counterinsurgency to try stuff like this even more often, it has also removed a major justification for such covert programs. Additionally, unlike the other approximately 12,000 extrajudicial assassinations and arrests carried out by the United States during the past calendar year—and who knows how many more in the course of our nation’s mostly unspoken-of history—this one got a ton of press, which helps to make the practice visible as a policy and a strategy, instead of just as a thing that happens. If this analysis strikes you as rather disappointingly glib and pragmatic, well, it strikes me that way too. What can I say? Sometimes the only way out is through.

Many people, it seems, had rather different and more emphatic reactions than I did to the news of Bin Laden’s death. A significant number evidently celebrated the event as they might have a local team’s championship victory, i.e. by dancing and cheering in public spaces, cracking open beers, and/or having sex. Another significant group observed the occasion by commenting on the unseemliness of the celebrations of the first group, and suggesting that this behavior was at best an unwise way for us to present ourselves to the world, at worst an indication of a damning flaw in the American character, or even in the human character. (Among the best of these latter folks was Mike Meginnis, blogging at the journal Uncanny Valley, who connected the desire to celebrate Bin Laden’s death with kitsch; I have some quibbles with this connection . . . but I’ll get to that.) At the time, I didn’t share any of these sentiments or concerns. This is maybe a little embarrassing, but the three major reactions I can recall having had while reading the news in the days following Bin Laden’s killing were these:

1) Abbottabad seems like a weird name for a city in Pakistan.

2) OMG, the race is like SO ON to be the first person to write a horrid jingoistic children’s book about the brave anti-terror dog that took part in the raid!

3) Geronimo? Seriously? We’re really going there?

Embarrassing or not, I’d like to spend a little more time over the next few weeks kicking around all three of these reactions. Thus, I bring you part one of three: Abbottabad.

Seems like a really nice place: mild weather, picturesque hills, etc. Based on some very rough projections from available demographic data, I’m imagining it as about the size of Pittsburgh. It was evidently a stop on the Silk Road—or one of the Silk Roads, at any rate—and, as we all know by now, it’s presently the site of “Pakistan’s West Point.”

Behind the weird name, there is indeed a story. The city was established in 1853 by Major James Abbott, following the annexation of the Punjab by the British East India Company in 1849. (This episode of global history is widely known, of course, but not commented on as often as it ought to be, so I’ll spell that out: for about a hundred years, from the mid-Eighteenth to the mid-Nineteenth Century, most of the Indian Subcontinent was controlled by a corporation.) Major Abbott—later General Sir Abbott, Knight Commander, Order of the Bath—was an English soldier, secret agent, administrator, adventurer, and writer, described by his superior Henry Lawrence (quoted in a charming obituary pasted into a copy of Abbott’s best-known work and inadvertently scanned by Google) as

made of the stuff of the true knight-errant, gentle as a girl in thought, word, and deed, overflowing with warm affection, and ready at all times to sacrifice himself for his country or his friend. He is at the same time a brave, scientific, and energetic soldier, with a peculiar power of attracting others, especially Asiatics, to his person.

Abbott was one of Lawrence’s “young men,” a group of British East India Company operatives sent as “advisors” to the Sikh Empire after the First Anglo-Sikh War, essentially to gather intelligence and to keep the Punjab pacified; he was instrumental in enabling the eventual British annexation of India’s Northwest Frontier. Earlier in his career, Abbott had travelled throughout Central Asia—to Uzbekistan, Kyrgyzstan, Afghanistan, and Russia—intriguing with and against agents of the Russian Empire as a participant in what came to be known popularly (thanks to Rudyard Kipling) as The Great Game.

The Great Game is one of those fun episodes that seem to presage an improbably large portion of the history that followed it. Like the Cold War, it presents the spectacle of two global powers doing ostensible battle—mostly through proxies, by means of exacerbating and exploiting ethnic and religious conflicts—in theaters of war that neither calls home. (Also like the Cold War, it looks in retrospect less like two nations fighting each other than like two empires bent on devouring the rest of the world, competing to exploit its resources more quickly and efficiently.) In the Great Game, the field of play was Central Asia, particularly Afghanistan—a region which of course came to feature prominently in late episodes of the Cold War, as well as in more recent events. The Great Game also seems to have foreshadowed other more abstract conflicts: that of megacorporations versus nation-states, for instance, and that of Western neoliberalism versus Islamism and tribalism. Even the phrase “The Great Game” has displayed an increasing propensity to slip the bonds of specific historical circumstance and become general verbal shorthand for covert action on a global scale.

Therefore, it’s not much of a stretch to suggest that James Abbott is among the very few guys with a plausible claim on having definitively steered the course of world history. Did I mention he was also a poet? He totally was! Check out this little gem, composed in 1853, on the occasion of the author’s departure from the outpost that had come to bear his name:

ABBOTTABAD

I remember the day when I first came here

And smelt the sweet Abbottabad air

The trees and ground covered with snow

Gave us indeed a brilliant show

To me the place seemed like a dream

And far ran a lonesome stream

The wind hissed as if welcoming us

The pine swayed creating a lot of fuss

And the tiny cuckoo sang it away

A song very melodious and gay

I adored the place from the first sight

And was happy that my coming here was right

And eight good years here passed very soon

And we leave you perhaps on a sunny noon

Oh Abbottabad we are leaving you now

To your natural beauty do I bow

Perhaps your winds [sic] sound will never reach my ear

My gift for you is a few sad tears

I bid you farewell with a heavy heart

Never from my mind will your memories thwart

One of the things you may have noticed about this poem is that it COMPLETELY sucks: metrics sloppy, syntax twisted to force clunky rhymes, punctuation absent, words repeated carelessly—and then there’s the whole logical fallacy of the two opening lines, because, dude, the place is NAMED AFTER YOU, so it can’t have been called “Abbottabad” when you first . . . oh, never mind.

A catalogue of this poem’s technical shortcomings, however, does not fully—or even mostly—explain why it’s such a piece of crap. It’s not only badly executed, but also badly conceived: bereft of any particularizing detail about either the departing speaker’s circumstances or the place he’s leaving, this is pretty much the most generic farewell poem imaginable. It could be applied to just about anybody leaving any nonurban locale anywhere between the subtropics and the Arctic and Antarctic Circles. It’s entirely possible that Abbott wrote these lines while overcome with genuine sorrow at leaving his namesake cantonment; it seems more likely that he just figured the occasion would benefit from some verse. But neither of these motives—not sincere emotion, nor social necessity—in itself provides sufficient material for writing a halfway decent poem.

Believe it or not, this IS going to have something to do with the death of Osama bin Laden.

I should probably historicize my critique a little: Abbott’s literary missteps probably seem more blatant to a modern reader than they would have back in the day. Among the courses my spouse currently teaches are surveys in reading poetry; she’s recently added “Abbottabad” to a list of really lame poems she uses to explain why syllabi tend to pass over certain eras in silence and haste—and also to demonstrate what cosmopolitan Anglophone modernist poets like Stein, Pound, and Eliot would later be writing in opposition to. In 1853, the British Empire was conspicuously light on rigorous and effective poets: Tennyson’s freedom had been compromised by his hiring-on as Poet Laureate, Elizabeth Barrett Browning’s perceived sphere of authority was constrained by her gender, nobody was yet paying much attention to Robert Browning or Matthew Arnold, and William Wordsworth—Tennyson’s Poet Laureate predecessor—was three years in the grave.

The evil that men do lives after them, and Wordsworth may actually be the key figure in explaining why Abbott’s cultured contemporaries might have accepted “Abbottabad” as being worth even the teensiest, weensiest damn. As you probably know, Wordsworth and his much cooler buddy Samuel Taylor Coleridge burst onto the scene in 1798 with a collection of poems called Lyrical Ballads, which set out (according to Wordsworth’s famous preface to the 1802 edition),

to chuse incidents and situations from common life, and to relate or describe them [. . .] in a selection of language really used by men; [. . .] to throw over them a certain colouring of imagination, whereby ordinary things should be presented to the mind in an unusual way; and, further, and above all, to make these incidents and situations interesting by tracing in them [. . .] the primary laws of our nature: chiefly, as far as regards the manner in which we associate ideas in a state of excitement.

Wordsworth’s and Coleridge’s aim was to make English poetry—which in their not-unjustified view had grown elitist, stylized, calcified, and smug—accessible to and conversant with the experience of common folks. Which, fine: this needed doing. But their prescriptions—which elevated forthrightness over wit, the individual over society, simplicity over complexity, and emotion over technique—have proved to possess some unpleasant side effects.

Coleridge’s work often tended toward the bizarre and sensational—cursed wandering sailors, druggy Orientalist fantasias, hot lesbian vampires—and often sought to achieve psychological insight by way of freaky supernatural dread. On the whole it looks rather sillier than Wordsworth’s output does, but also seems to have worn better over time—maybe because it doesn’t purport to be rooted in anybody’s authentic embodied experience, and therefore doesn’t overstep its authority. (Coleridge, who coined the phrase “willing suspension of disbelief,” is a total whiz on how writing goes about earning authority over readers.) Rereading Wordsworth’s preface—which explains that the poems in Lyrical Ballads take “low and rustic life” as their subject and as the source of their language because “in that condition of life our elementary feelings co-exist in a state of greater simplicity” and “the passions of men are incorporated with the beautiful and permanent forms of nature”—I am struck by how closely his arguments match the uncritical assertions of a particularly bad-news brand of populist conservatism: both maintain that passion is more trustworthy than erudition, that country folk live simpler lives than city folk do (and therefore have a better claim on moral and philosophical clarity), and that human character proceeds directly from nature (and is therefore always essentially the same, once removed from the perversions of culture).

Assertions like these HAVE to be made uncritically, of course, because they have no basis in fact, and can’t survive objective scrutiny. Though he calls Lyrical Ballads an “experiment” in his preface, Wordsworth’s project isn’t rigorous, and the extent to which he himself buys into what he’s peddling isn’t clear: when he wrote it, he was ostensibly a political radical cheering on the French Revolution and opposing urbanization, industrialization, and the monarchy; in less than a decade, however, he would become an avowed reactionary nationalist—a role he’d inhabit plausibly enough to be named Poet Laureate in 1843. (Cynical and/or fatuous contempt for consistency and logic is another quality I can’t help but associate with populist conservatism.)

The problem here, I think, is pretty obvious: Wordsworth’s flattering conception of the agricultural class—sincere though it may have been—these days comes off as presumptuous, self-serving, and disrespectful. And here’s the thing: this flaw didn’t make Lyrical Ballads any less popular or influential. In fact, it made it MORE influential—and more useful, at least in certain quarters—by suggesting and legitimating an approach to verse that was easy to write, easy to read, and easy to digest. I have no doubt that Wordsworth genuinely sought a way to jolt English poetry from its sclerotic state; unfortunately, replacing high-flown versification with plain language just resulted in the establishment of a new standard poetic diction, folksier in tone but no less amenable to vacuity. Wordsworth’s goal of presenting “ordinary things” in “an unusual way” is totally solid: this is where just about all avants-gardes start, with a desire to wake people up and make them critically aware of their situations. But almost right away, we find ourselves in trouble again. Dig:

1) Almost by definition, cultural apparatus that propagate works of art do not share that art’s aim of disrupting the status quo; in fact, they always depend to some degree on the status quo, in sort of the same way that the pharmaceutical industry depends on sick people.

2) One of the cultural apparatus’ favorite tricks—one that’s performed automatically, without anybody having to think about it—is to defuse radical works of art by promoting other works that are imitative of them: superficially similar, but less overtly challenging. This imitation has the effect of making the derivative works seem novel and cutting-edge—owing to their resemblance to the uncompromised original—while at the same time being far more accessible to a casual audience. Furthermore—and this is the best part—the success of the imitative works has the added effect of making the original work of art easier for that same casual audience to consume with comfort: instead of being received as a confounding and alienating indictment of that audience’s entire way of life and system of values, it can now be understood as a thing that’s, y’know, kind of like those other things. (The Situationist International called this trick recuperation.)

The real problem with English poetry in the Nineteenth Century—and maybe with all art, in every century—wasn’t the calcification of its rhetoric, exactly. Rather, it was the powerful tendency of dominant culture to refresh itself by devouring and digesting every work of art produced in opposition to it, and regurgitating that art as something that actually reinforces it. Therefore any renewal based solely on updating language can at best be a temporary fix. Wordsworth’s principled objections to the culture of his time led him toward certain subjects and gestures; these subjects and gestures got imitated and standardized as techniques; then, once readers learned to spot the techniques, they used them to define—and effectively to defang—a genre: English Romantic Poetry.

So that’s the big picture. The practical effect of this phenomenon was that after Romanticism reintroduced earnestness and emotional directness to English poetry, that poetry started to become sentimental. What I mean by “sentimental” is pretty much what Oscar Wilde meant in De Profundis—though the context of Wilde’s remarks was significant, and very personal. “A sentimentalist,” wrote the imprisoned Wilde to his erstwhile lover Bosie Douglas,

is simply one who desires to have the luxury of an emotion without paying for it. [. . .] You think that one can have one’s emotions for nothing. One cannot. Even the finest and most self-satisfying emotions have to be paid for. Strangely enough, that is what makes them fine. The intellectual and emotional life of ordinary people is a very contemptible affair. Just as they borrow their ideas from a sort of circulating library of thought—the Zeitgeist of an age that has no soul—and send them back soiled at the end of each week, so they always try to get their emotions on credit, and refuse to pay the bill when it comes in. [. . .] And remember that the sentimentalist is always a cynic at heart. Indeed, sentimentality is merely the bank holiday of cynicism. And delightful as cynicism is from its intellectual side, now that it has left the Tub for the Club, it never can be more than the perfect philosophy for a man who has no soul. It has its social value, and to an artist all modes of expression are interesting, but in itself it is a poor affair, for to the true cynic nothing is ever revealed.

The key thing to get here is that sentimentality of the kind that Wilde deplores is a) borrowed and b) unearned. Rather than being rooted in an individual’s response to a particular situation—whether depicted or experienced first-hand—sentimentality involves a response that’s rehearsed and performed. Instead of requiring any close attention to or sympathetic understanding of the specific circumstances, sentimentality provides a canned social script that efficiently circumvents attention and understanding while reassuring us that we are indeed attentive, understanding people; i.e. we convince ourselves that we’ve responded sensitively when in fact we’ve ignored the circumstances in favor of focusing on our own capacity for, and facility with, emotion.

We can build on Wilde’s indictment with the useful definitions of sentimentality provided by I. A. Richards, writing rather more impersonally in his 1929 book Practical Criticism. In trying to explain what people mean when they complain that something is sentimental, Richards identifies three subspecies: quantitative sentimentality (“A response is sentimental when it is too great for the occasion”), qualitative sentimentality (“A crude emotion, as opposed to a refined emotion, can be set off by all manner of situations [. . . p]oems which are very ‘moving’ may be negligible or bad”), and a third, somewhat trickier variety:

Sentiments [. . .] are the result of our past interest in the object. For this reason they are apt to persist even when our present interest in the object is changed. For example, a schoolmaster that we discover in later life to have been always a quite unimportant and negligible person may still retain something of his power to overawe us. Again the object itself may change, yet our sentiment towards it not as it was but as it is may so much remain the same that it becomes inappropriate. For example, we may go on living in a certain house although increase in motor traffic has made life there almost insupportable. Conversely, though the object is just what it was, our sentiment towards it may completely change through a strange and little understood influence from other sentiments of later growth. The best example is the pathetic and terrible change that can too often be observed in the sentiments entertained towards the War by men who suffered from it and hated it to the extremist [sic] degree while it was raging. After only ten years they sometimes seem to feel that after all it was “not so bad,” and a Brigadier-General recently told a gathering of Comrades of the Great War that they “must agree that it was the happiest time of their lives.” [. . .] A response is sentimental when, either through the overpersistence of tendencies or through the interaction of sentiments, it is inappropriate to the situation that calls it forth. It becomes inappropriate, as a rule, either by confining itself to one aspect only of the many that the situation can present, or by substituting for it a factitious, illusory situation that may, in extreme cases, have hardly anything in common with it.

Richards finishes his treatment of the topic with the important observation that although we tend to associate sentimentality with an excess of emotion, the real problem is often exactly the opposite:

Most, if not all, sentimental fixations and distortions of feeling are the result of inhibitions, and often when we discuss sentimentality we are looking at the wrong side of the picture. If a man can only think of his childhood as a lost heaven it is probably because he is afraid to think of its other aspects. And those who contrive to look back to the War as “a good time” are probably busy dodging certain other memories.

If the task of a work of art, as the young Wordsworth suggested, is to present ordinary things in an unusual way with the aim of making the audience more alert to and engaged with the experience of existing in the world, then the major challenge that art faces is the fact that people don’t actually WANT to be alert and engaged—at least not for more than a couple of hours at a time, in specific social settings. Such heightened sensitivity swiftly becomes a real pain in the ass: the sort of thing that’s likely to cause us to miss deadlines on quarterly reports and forget to pick the kids up from daycare. We do, however, want to feel the emotional intensity that comes with being alert and engaged—to borrow, as Wilde might say, feelings that we have not earned—and we always find no shortage of lenders. This is the secret to sentimental art’s success; I think we can all appreciate the appeal. And provided we’re able to recognize this stuff for what it is when we’re consuming it (which isn’t always easy) I don’t really see that it deserves to be stamped out, or campaigned against. It’s not particularly valuable, but neither does it do a tremendous amount of harm. It may be dishonest—to no one more than itself—but it isn’t deceitful.

“Abbottabad,” however, is another story. It isn’t sentimental, exactly, although it contains sentimentality. It’s characterized less by its lack of self-consciousness than by its deliberate omission of key context: specifically any reference to what Abbottabad actually is (a British cantonment in the recently-annexed Punjab) or to what Abbott himself is actually doing there (conducting a counterinsurgency campaign to pacify the local population). These are pretty important details, and we can be pretty sure they weren’t omitted by accident—but this is not to say Abbott’s omission of them was deceitful. Power doesn’t often deceive; it doesn’t need to. Instead of making persuasive statements at variance with reality, power determines reality. “Abbottabad” erases the insurgency that Abbott and his men had already suppressed: as described in the poem, the Punjab isn’t a region in conflict, but rather a civilized outpost of the British Empire, where gentlemen write heartfelt poems in observance of significant occasions. In its cloying banality, “Abbottabad” is precisely an assertion of its author’s total control: aside from the flat statement that “coming here was right,” the poem advances no arguments, because there’s nothing to argue. Move along, it says; there’s nothing to see here.

As Richards’ analysis suggests, sentimentality has a political dimension, and therefore a political application. When a work of art—maybe we should just call it a cultural product—operates by taking deliberate advantage of its audience’s sentiments in order to recuperate dissent and reinforce an established social order, then something pernicious is afoot. “Abbottabad,” therefore, is something rather more troublesome than sentimental verse: “Abbottabad” is kitsch.

I hope to get into what kitsch means, exactly—and how kitsch has and hasn’t manifested itself in our national reaction to the killing of Osama bin Laden—in my next post, which will feature as its special guest star Cairo, the fearless anti-terror dog. Until then . . . happy Independence Day!

A huge translation of hypocrisy, / vilely compiled, profound simplicity.

So—have you seen Anonymous yet? Do you plan to?

Yeah, me neither. I did, however, read Stephen Marche’s “Riff” on the movie in the New York Times Magazine a few weeks ago, and I recommend that you do the same. It’s pretty entertaining, and more importantly it does a good job of articulating what’s objectionable about Anonymous—which is not just that it promotes nonsense about the plays of Shakespeare, but that it doesn’t seem to give much of a damn whether the case it argues against the historical record is accurate or not.

One minor quibble with Marche: at one point he refers to the Oxfordian quasi-scholars (who hold that Edward de Vere was the “real” Shakespeare) as “the prophets of truthiness”—and though he’s absolutely right to evoke truthiness in the context of Anonymous, I think his aim is a little off. Truthiness isn’t being perpetrated, exactly, by the committedly snobbish Oxfordians, whose attempts to braid the stems of a few cherry-picked facts seem quaint and almost respectable by contrast to the film their research has inspired: wrongheaded though they may be, the Oxfordians hold their ground when they’re called out.

Anonymous, by contrast, DOES traffic in something like truthiness—it may be more accurate to call it bullshit—in that its makers are perfectly content, and indeed prefer, to lob irresponsible assertions and then fall back with a shrug, like drunk revelers who shoot pistols in the air at a carnival and melt into the crowd when all the shouting and running starts. Pressed on his film’s fast-and-looseness by NPR’s Renée Montagne, screenwriter John Orloff tap-dances a little about how, y’know, the Shakespeare plays themselves stretch the truth for the sake of a good story—as if depicting Richard III as a hunchback is comparable to suggesting that his reign was entirely the invention of Polydore Vergil—and then says the following:

Montagne only has a few seconds left, but she doesn’t let this pass: um, of COURSE the movie is about who wrote the freaking plays; to suggest otherwise is absurd. Among the jawdropping qualities of Orloff’s vacuous statement—and they are many: I mean, are we seriously supposed to accept that a film that assumes that a regular guy from Stratford couldn’t have had the skillset or the résumé to produce great literature is really about “the process of creativity?” or that the best way to show “how art survives and exists in our society” is by means of a byzantine conspiracy yarn set in late-Elizabethan England?—surely the worst is the maddening implication that Orloff himself may not be totally convinced of the veracity of his own film’s premise, and indeed may not have thought about it all that much. It’s just material to this dude: it’s a pitch, a tagline, designed to stir things up; it possesses and aspires to no more substance than the hook of a pop hit. What if I told you that Shakespeare never wrote a single word! Eh? You see what I did there? Do I have your attention?

But where, one might legitimately ask, is the harm? Does Anonymous really cheapen the culture when the culture is already, y’know, pretty cheap? Does it really make us dumber than we already are?

Yeah, actually, I think it does. Sure, one can (and many will) argue that Anonymous is a net win for Shakespeare (or whomever) and also for the literate culture at large because it will prompt new and closer readership of the work, in much the same way that Dan Brown sent tens of thousands of fresh-minted armchair art historians into museum gift shops and onto the internet in search of Leonardo’s Last Supper. (This seems to be the position that Sony Pictures is taking, promoting the film with a classroom study guide ostensibly intended “to encourage critical thinking by challenging students to examine the theories about the authorship of Shakespeare’s works and to formulate their own opinions,” which sounds just excruciatingly fair-’n’-balanced to me.) Sorry, but I just don’t buy this argument. Yeah, maybe there’s value to motivating attention toward works that have become inert from neglect or (and?) over-familiarity, but I see approximately zero evidence that the works of Shakespeare have been neglected of late, nor any sign that their reception has grown inert. To the extent that Anonymous introduces the works of the Bard to a new audience, it introduces those works not as artifacts of a superlatively imaginative human consciousness using language to engage an audience, to curry political favor, to struggle with major questions of existence, to earn cash and prestige, and to tell an ascendant nation complex stories about itself, but rather as an already-cracked code: an enormous crossword puzzle with all the letters already filled in and all the clues missing. Now, don’t get me wrong, I can be as postmodern as the next guy when it comes to issues of interpretation . . . but I also try to be pragmatic on such questions, and the ultimate rubric in a case like this one is probably whether the works being interpreted become more interesting or less interesting when viewed through the lens of the theory under consideration. The Oxfordian hypothesis does not perform well on this particular racetrack, and Anonymous can barely roll itself out of the pit.

Okay, then, lit snob (one might also legitimately ask): since you brought up Dan Brown, how is Anonymous any worse than The Da Vinci Code? Or, for that matter, worse than JFK? Now that the guy who directed Independence Day is crapping on your precious Shakespeare you’re urging the troops to the battlements, but where were you when those other conspiracies were getting mongered, huh? You’re saying Anonymous is different somehow?

Yup, pretty much: different and worse, for a number of reasons. There is, first of all, the matter of Anonymous’s target selection. While Dan Brown and Oliver Stone seek to encourage suspicion of powerful and entrenched institutions that have earned close scrutiny and that frankly can afford to take the hit—Roman Christianity and the American military-industrial complex, respectively—I’m not sure what corrupt institution Anonymous aims to disinfect with daylight. No matter how you felt while trying to memorize Romeo’s But, soft! what light through yonder window breaks speech back in middle school, Shakespeare isn’t really oppressing anybody. Opulent and hidebound though it may be, the British monarchy isn’t exactly being propped up by the plays of Shakespeare. And if the villain here is supposed to be the academic establishment—I’m imagining a scrapped preview trailer set at the MLA Conference, featuring cloaked adjunct professors darkly muttering stuff like our secrets must be preserved! in the incense-befogged corridors of a Midwestern convention center—well, to me that seems a little like bullying the skinny, bespectacled dork on the playground.

I tend to roll my eyes at tales like Brown’s and Stone’s, but they don’t make me nervous. When conspiracy stories start accusing groups that are relatively powerless in practical terms of hiding the truth, or perpetrating hoaxes, or exerting occult and improper influence over the unsuspecting rabble, then I feel obliged to start clearing my throat. If the implicated groups are made up of harmless managerial-class dissenters like Shakespeare scholars (or for that matter environmental activists, the favored late-90s-post-Communist-pre-terrorist bogeymen of contemptible hacks like Tom Clancy and Michael Crichton: a particularly hilarious premise for those of us who actually know environmental activists, and understand that they’re unlikely to accomplish a successful mass mailing, never mind world domination), then I think it’s sufficient to simply mock the offending conspiracy yarn, the way I’m mocking Anonymous now. When the alleged conspirators are groups that are broadly disempowered, and are defined by ethnicity, religion, gender identity, national origin, etc. . . . well, then it’s not really funny anymore, is it? I’m not remotely suggesting that Anonymous is guilty of that—but the irresponsibility of its approach is the same kind of irresponsibility.

There is also, with Anonymous, the problem of narrative mode: i.e. the manner in which screenwriter Orloff and director Roland Emmerich choose to present their tale. More specifically, Anonymous dispenses with a frame narrative—which is to say the story of de Vere’s conspiracy is the only story it has to tell. Terrible though it may be, The Da Vinci Code doesn’t suffer this shortcoming: Dan Brown has the good sense to keep his pseudohistorical esoterica strictly confined to his book’s backstory, while all the action in the present involves the unmistakably fictional adventures of his Indiana-Holmesian protagonist. (In other words, while Mary Magdalene—understood by Christians to have been an actual person—is a key figure in the book’s plot, she is not a character in the book.) Although JFK is played in a far more urgent and sincere key, we should note that it too uses a frame narrative: it’s presented (at least initially) not as the story of a conspiracy to murder President Kennedy, but of the efforts of Orleans Parish District Attorney Jim Garrison to uncover and prove that conspiracy. The closest thing to a frame narrative Anonymous has, however, is the what-if-I-told-you teaser prologue spoken by Derek Jacobi. (Who also played the non-diegetic narrating Chorus in Kenneth Branagh’s Henry V! And who’s also an outspoken Oxfordian! See? Evidence accumulates!)

This absence of a frame narrative might not seem like a big deal, but it is. In a conspiracy yarn, the use of a frame accomplishes a couple of important things: first, it creates a point of entry for the audience, a detective character who’s (almost) as ignorant of the conspiracy as we are, and who’ll walk us through it as she or he figures it all out. While this character’s constant exclamations of eureka! and/or this goes all the way to the top! may grow tiresome, they perform the useful function of signposting the audience’s own reception of the conspiracy as it unravels. Second, a frame narrative allows some critical daylight to creep between the story and the peculiar theories that make up its content. This fictional ambiguity—an ambiguity that’s present in the audience’s experience of the narrative, not the kind that materializes outside it, when some jackass screenwriter backpedals in an interview—has the effect of critically engaging us, and making us work to get out in front of the story. Is this conspiracy just something that the main characters believe in, or is it literally true in the world of the narrative? Is this story pulling our legs, or do its makers really expect us to buy into this stuff?

Thanks to its frame, The Da Vinci Code can function effectively even for readers who aren’t prepared to get on board with its swipe at orthodox Christianity; I think we can safely assume that the 80 million people who own a copy haven’t wholeheartedly embraced the gnostic gospels. In purely functional terms, Dan Brown’s conspiracies are MacGuffins; the linchpin of his plot could be the Golden Fleece or the toothbrush of Odin as easily as the Holy Grail. JFK—which is both more overheated and more serious about what it’s doing—handles its subject conspiracy in roughly the opposite way: as it becomes clearer and clearer that not only the fictionalized Jim Garrison but also the film itself really believes, and really wants us to believe, that the historical JFK assassination was in reality a vast antidemocratic plot, the Garrison narrative begins to recede and collapse (along with Costner’s accent—zing!), and the illusionistic continuity of the fictional world is repeatedly ruptured.

Oliver Stone famously characterized JFK as a “counter-myth” to the official account, which it really isn’t: it’s a fictional depiction of the Clay Shaw trial that gradually transforms into a polemical documentary heavy on speculative reenactments. The term “counter-myth” could be better applied to the all-but-frameless Anonymous, which doesn’t bother to depict the uncovering of the Oxfordian conspiracy, but only the conspiracy itself. Anonymous doesn’t argue for de Vere’s authorship of the plays, nor does it display any understanding that that’s the sort of thing that might need to be argued for; instead it just presents de Vere’s authorship as part of a series of plot events, which may or may not correspond to some extra-filmic historical record. Were I convinced of Anonymous’s sincerity, I’d be inclined to regard it in sort of the same way I do contemporary Christian music—i.e. I’m not especially inclined to entertain its initial assumptions, I’m irritated that it seems to assume that I am, and I’m therefore not able to set those considerations aside and just enjoy the craftsmanship of the product, such as it is—but Anonymous is not sincere. And were I convinced of its insincerity—if I thought its aim was just to make a little mischief with history and literature, in a manner akin to that of, say, Shakespeare in Love (which fills in gaps in the Bard’s scant biography without coloring outside the lines), or monumental goofs like Abraham Lincoln, Vampire Hunter, or even an honest-to-god masterpiece like Brad Neely’s (NSFW!) “George Washington”—well, then I could muster some respect for it as harmless entertainment, something clearly not intended to mislead or confuse anybody.

But with Anonymous, of course, sincerity and insincerity aren’t even on the table. It doesn’t make sense to ask whether the film really believes the story it’s telling, because the film itself doesn’t know and doesn’t care. In keeping with its extraordinary lack of narrative and rhetorical ambition, its only goals are functional: it wants the extra jolt of adrenal seriousness that comes from rooting its story in supposed real-world events, but it’s unwilling to surrender the freedom to make stuff up . . . and its bookkeeping regarding what among its contents is factual versus speculative versus full-on fictional appears to be slapdash and/or nonexistent.

That being said, I might still be willing to let Anonymous pass in silence if irresponsibility were not so central to its project. I thought, for instance, that Braveheart was pretty stupid on the whole, but at least its tagline was “Every man dies, not every man really lives,” rather than “What if William Wallace was the illegitimate father of Edward III?” I guess I’m also bugged by the fact that unlike other conspiracy potboilers, which generally take historical events as their raw material, Anonymous is a dramatic work that wants to function as a gloss on yet another body of dramatic work, essentially laying its cuckoo’s egg in the well-feathered Shakespearean nest; this amounts not to boldness but to laziness. It also seems to position Anonymous as a successor to, and possibly a substitute for, the plays of Shakespeare: all that gnarly iambic pentameter sure is tough to parse, but thanks to Anonymous we now know that it’s just a bunch of coded propaganda intended to sway contemporary court intrigues, and we can comfortably interpret it—and dismiss it—as such.

Parting shot, disguised as a clarification: in the foregoing paragraphs I have made comparisons between Anonymous and conspiracy stories like The Da Vinci Code and JFK to the detriment of the former; I hope I have been clear that my intention has NOT been to endorse the latter. Conspiracies are like the potato chips of the narrative food pyramid: very appealing, fleetingly satisfying, and nutritionally void. Unless a particular conspiracy story is robustly, voluptuously fictional—willfully impossible to take seriously, and therefore more concerned with the nature of truth and knowledge than with its chosen posited cabal or plot (I’m thinking here of a family of works that includes The Crying of Lot 49 and Foucault’s Pendulum, Twin Peaks and The X-Files)—then its contribution to the culture is probably a net negative. Even if their intentions are honorable, such stories always encourage us to think of history as something to which we’re spectators: a few of us can rattle off all the players’ stats from memory, while most of us spend the whole game trying to flag down a beer vendor, but all of us are stuck in the stands while the real action happens on the field.

This is not an accurate or a productive way to understand the world. The course of history is not generally set by small groups of scheming individuals, but rather by enormous impersonal institutions; we are not passive subjects, but active and implicated (if individually powerless, and generally unthinking) participants. Power is invisible, sure enough, but it doesn’t maintain its invisibility by hiding; it doesn’t have to. We willfully avert our eyes from it, or we fail to see it when we’re looking right at it.

In a piece that appeared in Z in 2004, Michael Albert does an admirable job of explaining the appeal and the limitations of conspiracy theory; he also presents an instructive contrast between it and what’s often called institutional analysis. I suspect—and hope—that his piece has been widely circulated among the campers in Zuccotti Park, McPherson Square, Frank Ogawa Plaza, and Seattle Central Community College as they collectively plan their next move. The Occupy protestors’ habit of identifying a particular human sin (i.e. greed) and/or a small group of individuals (i.e. the One Percent) as the perpetrators of our present international crisis has been rhetorically effective, but it’s kind of a philosophical dead end: sure, there are indeed scoundrels out there with a lot to answer for, but rather than heating up the pine tar and gathering the feathers, now seems like a good time to focus on the complex unmonitored systems that empowered and encouraged those scoundrels, and maybe even to try fostering some kind of broad and serious national conversation about the way we assign value to things. To pick up that dropped baseball metaphor, rather than dissecting the weaknesses of the visiting team, or speculating about who’s been doping, it may be time to consider reassessing the rulebook and redesigning the ballpark.

If we’re to stand any kind of chance of doing that successfully—of doing much of anything successfully—we’ll have to cultivate and safeguard our capacity to sort through facts. And by facts I mean, y’know, facts: independently verifiable data about conditions and circumstances, causes and effects. You can’t make policy without facts; not honestly, anyway. Twenty-odd years of a pretty-much-constantly ballooning economy proved to be a golden era for postmodern ideologues both left and right (although the pomo left mostly seems to have used its rhetorical chops for seducing impressionable undergrads, while the pomo right mostly used theirs to, like, invade Iraq and stuff), and made this notion easy to deny or forget. The assumption always seemed to be that the value of ideas, just like everything else, is best proved in the consumer marketplace: what’s true is what polls best, what goes viral, what pulls in the best ratings, and to suggest otherwise was to reveal oneself as a member of the pathetically outmoded “reality-based community.” (That’s a shot at Dubya, of course, but Clinton governed more or less the same way.)

And this cavalier disinterest in facts, of course, brings me back to Anonymous. What’s most troublesome about the movie isn’t that it’s a 130-minute-long lie, it’s that it doesn’t bother to lie: unlike the Oxfordians, who make their feeble case by means of citation, quotation, and coincidence, Anonymous aims to convince via bald-faced assertion amped up with fancy production design and ample CGI. Anonymous seems to suggest—even to declare, by means of its de-Vere-as-Shakespeare-as-propagandist premise—that this is how ALL history is made: not by contest or argument, but by spin and obfuscation and special effect. This thesis might not always be mistaken, but it should never be regarded as acceptable.

Wait wait wait wait, some of you are now saying. You seriously just blew 3500 words trash-talking a movie you admit you HAVEN’T SEEN? If you haven’t seen it, how do you know it’s bad? To which I’ll respond—just as I have said in the past—that whether the movie is any good has nothing to do with the point I’m making. I’m not saying Anonymous is BAD. I’m saying it’s EVIL. Dig?

In other, me-related news, the latest victim of my short-fiction campaign is Joyland, the awesome web-based literary magazine published by Emily Schultz and Brian Joseph Davis. If you aren’t familiar with it, Joyland is to my knowledge unique among litmags in that it publishes work from throughout North America, but is curated regionally by several geographically-dispersed editors. Since I’m currently a Chicagoan, my story falls in the domain of Midwest editor Charles MacLeod, and it seems appropriate to thank him for encouraging me to get off my ass and send him something. (K and I know Charles from our extended honeymoon in Provincetown.)

The story of mine that’s up at Joyland is called “Seven Names for Missing Cats”; it came about while I was in grad school in early 2004. I was studying at the time with the novelist Jane Alison, who is a genius of the highest order and who is very good at fostering an atmosphere that’s conducive—at least it was for me—to reassessing the core principles underlying whatever it is you think you’ve been doing, writer-wise. With “Missing Cats,” the object of the game was to write a story that omitted as many standard narrative operations as possible, or anyway left them up to the reader to create, or infer; it’s probably the thing I’ve written that I am most happy with, and I’m grateful to Joyland for giving it a home.